http://graphics.cs.cmu.edu/projects/OF/

Dense Optical Flow Prediction from a Static Image

Presented at ICCV, 2015

People

...

Abstract

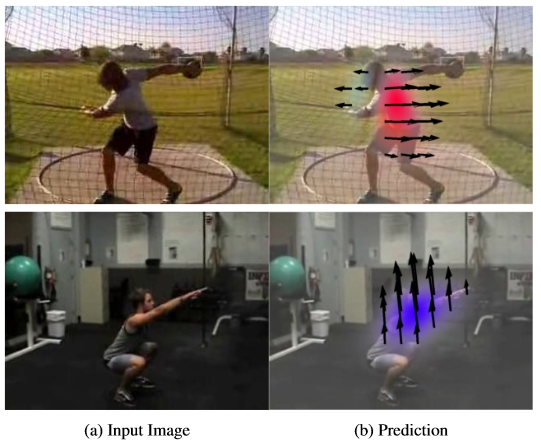

Given a scene, what is going to move, and in what direction will it move? Such a question could be considered a non-semantic form of action prediction. In this work, we present a convolutional neural network (CNN) based approach for motion prediction. Given a static image, this CNN predicts the future motion of every pixel in the image in terms of optical flow. Our CNN is trained on tens of thousands of realistic videos. Our method requires no human labeling at all and predicts motion from the context of the scene. Because our model makes no assumptions about the underlying scene, it can predict future optical flow across a diverse set of scenarios. We outperform all previous approaches by large margins.
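The page describes the network's output only as dense optical flow. As a rough illustration, and not the authors' code, a dense flow field can be stored as one (u, v) displacement per pixel. The sketch below answers the abstract's two questions, what moves and in which direction, for such a field. `summarize_flow` and its `min_magnitude` threshold are hypothetical names introduced here for illustration.

```python
import math
from typing import List, Tuple

# Assumed representation: flow[y][x] = (u, v), the predicted displacement in
# pixels of the pixel at row y, column x (u: horizontal, v: vertical, +v = down).
FlowField = List[List[Tuple[float, float]]]


def summarize_flow(flow: FlowField, min_magnitude: float = 1.0):
    """Return per-pixel magnitude, direction in degrees, and a 'moving' mask.

    min_magnitude is a hypothetical threshold (in pixels) below which a
    pixel is treated as static.
    """
    magnitude, direction, moving = [], [], []
    for row in flow:
        mag_row, dir_row, mov_row = [], [], []
        for u, v in row:
            m = math.hypot(u, v)
            # Image rows grow downward, so v is negated to report angles
            # counter-clockwise from +x as the image appears on screen.
            a = math.degrees(math.atan2(-v, u)) % 360.0
            mag_row.append(m)
            dir_row.append(a)
            mov_row.append(m >= min_magnitude)
        magnitude.append(mag_row)
        direction.append(dir_row)
        moving.append(mov_row)
    return magnitude, direction, moving


if __name__ == "__main__":
    # A toy 2x2 field: static, down-right, straight up, and a tiny drift.
    toy = [[(0.0, 0.0), (3.0, 4.0)], [(0.0, -2.0), (0.5, 0.0)]]
    mag, ang, mov = summarize_flow(toy)
    print(mag)
    print(mov)
```

On the toy field, the pixel displaced by (0, -2) is reported as moving straight up (90 degrees), while the (0.5, 0) drift falls under the assumed 1-pixel threshold and is treated as static.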

Videos


Here is the ICCV spotlight for our paper.

Paper


Jacob Walker, Abhinav Gupta, and Martial Hebert, Dense Optical Flow Prediction from a Static Image, in International Conference on Computer Vision (2015). [Paper (2.4MB)] [Poster (11.4MB)]

BibTeX

@inproceedings{of_iccv2015,
  author="Jacob Walker and Abhinav Gupta and Martial Hebert",
  title="Dense Optical Flow Prediction from a Static Image",
  booktitle="International Conference on Computer Vision",
  year="2015"
}

Code

[Code (hosted on GitHub)] [Trained Model]

Funding

This research is supported by:

- NSF IIS-1227495

Send comments and questions to Jacob Walker.